UX 3.0: Complete guide to best practices, technical aspects, and evolution

March 06, 2026

For decades, digital design was structured around the interface. Screens, flows, and journeys organized the logic of the experience, and optimizing meant reducing friction and increasing efficiency. Performance was measured through conversion, visual clarity, and task completion time. The center of gravity was located on the visible surface of the product.

This model reveals its limits once intelligence starts to operate beyond the screen. Systems connect devices, process data in real time and adjust behavior automatically. The experience is no longer just graphical interaction; it depends on invisible architectures, algorithmic decisions and systemic integration. What sustains the product is not only layout but also the logic that ties together context, data and automation.

It is within this structural shift that UX 3.0 emerges. More than a visual trend, it signals a change in the unit of design, the metrics used to evaluate experience, and the responsibility of design.

In this article, we analyze how UX 3.0 redefines scope and governance, and how technologies such as generative AI, multimodality and contextual intelligence begin to structure experiences in an increasingly automated market.

What defines UX 3.0?

UX 3.0 is the shift from interface-centered design to a model oriented toward intelligent, contextual and adaptive ecosystems. The unit of design is no longer the screen or the linear journey; it is the system that integrates data, interprets situational signals and executes decisions in real time. The experience is structured as a distributed architecture that connects devices, APIs, recommendation engines and algorithmic models operating continuously.

Within this paradigm, interaction is not limited to the user's explicit commands. The system uses variables such as behavioral history, location, usage patterns and probabilistic inference to anticipate needs and prioritize actions. Personalization becomes predictive and context-driven, which requires clearly defined levels of autonomy, criteria for explainability and mechanisms of reversibility. Design now models algorithmic behaviors, not only navigation flows.

In addition, UX 3.0 incorporates multimodality as an integrated architecture, combining visual interfaces, voice, sensors, biometrics and immersive environments. The discipline assumes a strategic role in data governance, bias mitigation, and the definition of guardrails for AI systems.

Traditional usability and conversion metrics become insufficient. They are complemented by indicators such as perceived trust, cross-device coherence and contextual precision, reflecting the systemic maturity of the experience.

The evolution of UX scope

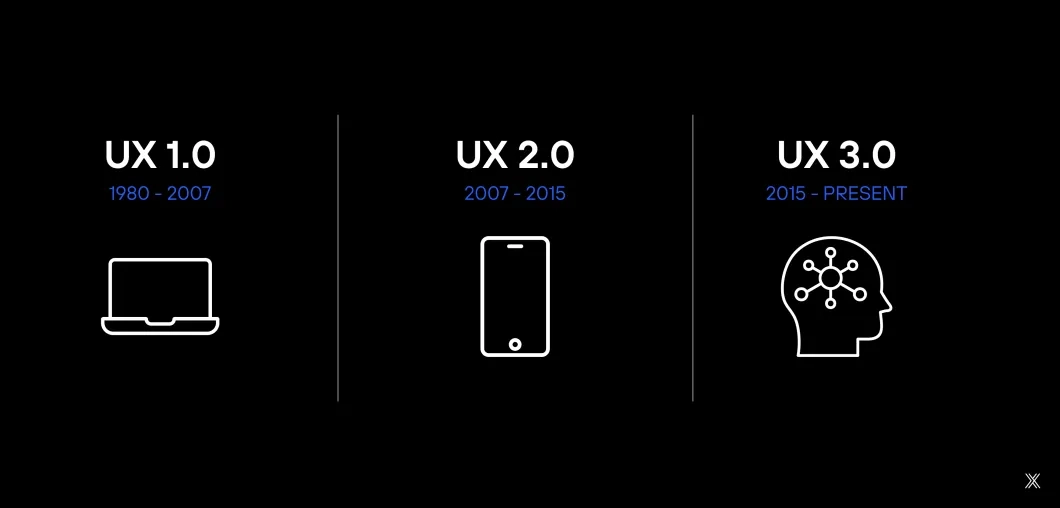

The shift that UX 3.0 represents becomes clear only when we look at how the scope of the discipline has evolved over the last decades. Each phase expanded design's field of action and moved the center of gravity of the experience.

UX 1.0: the desktop era

The challenge was essentially functional. The priority was usability, visual consistency and information organization. Design structured hierarchies, menus, navigation patterns and interaction rules. The dominant metric was efficiency, measured through execution time, error rate and clarity of the interface.

UX 2.0: the mobile era

The scope expanded beyond the isolated interface. The experience began to consider flow, emotion and continuity across channels. Qualitative research, personas, responsive design and omnichannel thinking became part of the discipline. Metrics such as engagement, retention and conversion became central.

UX 3.0: the algorithmic era

The object of design is no longer the interface or the journey. It is the data-driven systemic environment. The experience is shaped by algorithms, integrations between devices, contextual variables and inference models. Design now structures intelligent behaviors and relationships between systems, not only navigation flows.

The implication is strategic: the experience can operate invisibly, distributed across physical and digital devices and articulating voice, sensors, spatial interfaces and autonomous agents. Performance measures now include contextual relevance, algorithmic predictability and systemic trust.

A concrete example of UX 3.0 is a financial application that does not wait for the user to open the app before acting. By analyzing spending patterns, payment calendars and historical behavior, the system anticipates a possible cash flow imbalance and proactively suggests a reorganization of payments.

The recommendation appears on the smartphone, can be confirmed by voice through the home assistant, and is instantly reflected on the corporate desktop. If the user rejects the suggestion, the model adjusts its future inferences.

In this scenario, the experience does not live on any single screen, but in the intelligent orchestration of data, devices and automated decisions. Design did not simply draw a payment flow. It structured levels of autonomy, criteria for explainability and mechanisms of reversal.
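
To make the scenario more tangible, here is a minimal Python sketch of the anticipation step. Everything in it is an assumption made for illustration: the `Payment` fields, the `deferrable` flag, and the heuristic of deferring the largest movable payment are hypothetical, and a real product would rely on far richer forecasting. What the sketch does show is the shape of the output: a suggestion that carries its own reason, requires confirmation, and is marked as reversible.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Payment:
    name: str
    due: date
    amount: float
    deferrable: bool  # hypothetical flag: may the system propose moving it?


def first_shortfall(balance: float, payments: list[Payment]) -> Payment | None:
    """Return the first payment that would push the balance below zero."""
    for p in sorted(payments, key=lambda p: p.due):
        balance -= p.amount
        if balance < 0:
            return p
    return None


def suggest_reorganization(balance: float, payments: list[Payment]) -> dict | None:
    """Propose deferring one payment. Nothing is executed here."""
    hit = first_shortfall(balance, payments)
    if hit is None:
        return None  # no imbalance projected, so stay silent
    # Only payments due on or before the shortfall can relieve it.
    candidates = [p for p in payments if p.deferrable and p.due <= hit.due]
    if not candidates:
        return None
    # Illustrative heuristic: defer the largest movable payment.
    # It does not verify that the shortfall is fully covered.
    target = max(candidates, key=lambda p: p.amount)
    return {
        "action": "defer",
        "payment": target.name,
        "reason": (
            f"Projected balance goes negative on {hit.due.isoformat()} "
            f"when '{hit.name}' is due."
        ),
        "requires_confirmation": True,  # autonomy level: suggest, do not act
        "reversible": True,
    }
```

The `reason` field is the explainability criterion in miniature: the same text can be shown on the smartphone or read aloud by a voice assistant.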

It is this invisible coordination layer that characterizes UX 3.0 as a structural transformation, and not merely an aesthetic evolution.

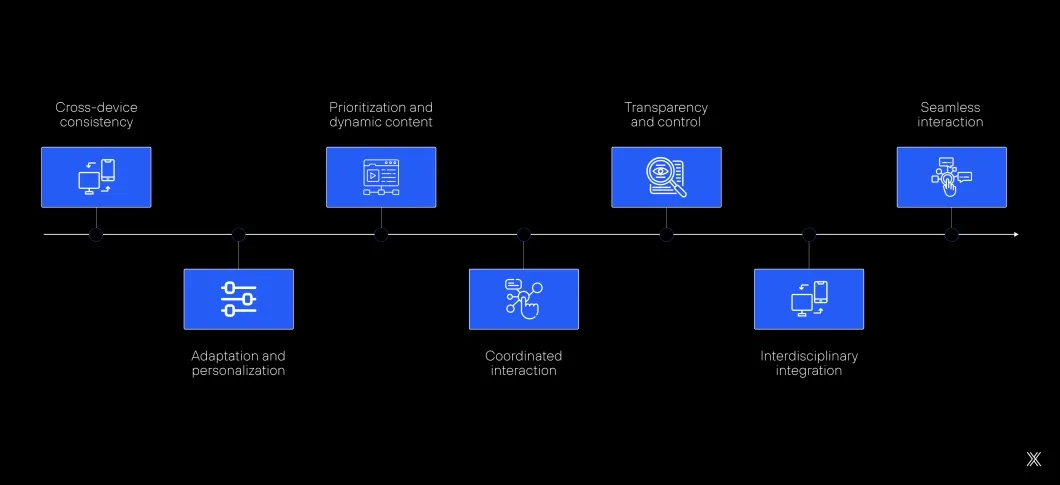

Key structural aspects of UX 3.0

As we have seen, UX 3.0 operates across multiple layers simultaneously: interface, decision logic, technological infrastructure and algorithmic models. The experience results from the coordination between devices, services, APIs, recommendation engines and real-time data flows. For this to happen, several mechanisms must operate together.

Ecosystem as the operational unit of experience

In UX 3.0, the coherence of the experience depends on the ability to maintain consistency across devices, states and digital identities. Smartphones, desktops, voice assistants, wearables and immersive environments share data and operational context.

This implies working with cross-device state synchronization, interoperability through APIs, semantic standardization and integration between AI-based systems and traditional systems. The unit of analysis becomes the ecosystem as an infrastructure for continuous interaction.
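
One simple way to picture cross-device state synchronization is a last-writer-wins merge. The sketch below is an assumption, not a description of any specific product: the `Entry` structure, the integer `version` used as a logical clock, and the tie-break by device name are all hypothetical. Production systems often use more robust approaches, but the idea of merging per-key state deterministically is the same.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Entry:
    value: Any
    version: int  # hypothetical logical clock, incremented on every write
    device: str   # writer of the value; used only to break version ties


def merge(local: dict[str, Entry], remote: dict[str, Entry]) -> dict[str, Entry]:
    """Last-writer-wins merge of two per-key state maps."""
    merged = dict(local)
    for key, theirs in remote.items():
        ours = merged.get(key)
        # Higher version wins; equal versions fall back to device name so
        # that every device resolves the conflict the same way.
        if ours is None or (theirs.version, theirs.device) > (ours.version, ours.device):
            merged[key] = theirs
    return merged
```

Because the comparison is deterministic, merging phone state into desktop state gives the same result as merging in the opposite direction, which is what keeps the experience consistent across devices.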

AI as infrastructure for decision and adaptation

Artificial intelligence assumes a structural role in shaping the experience. Recommendation engines, language models, probabilistic inference and personalization systems operate continuously on behavioral and contextual data.

Design defines algorithmic decision parameters, limits of autonomy, fallback criteria and points of human intervention. Principles such as explainability, auditability and operational predictability become central, because user trust depends on clarity about how recommendations and automations are generated.
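
As a rough illustration, limits of autonomy and fallback criteria can be written down as explicit, reviewable parameters. The thresholds, the `Guardrails` fields and the three modes below are hypothetical values chosen for this sketch; the right numbers would depend on the product and on its risk profile.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTO_EXECUTE = "auto_execute"  # the system acts on its own
    SUGGEST = "suggest"            # the system proposes, the user confirms
    ASK_HUMAN = "ask_human"        # fallback: hand the decision to a person


@dataclass(frozen=True)
class Guardrails:
    # All values are illustrative assumptions, not recommendations.
    auto_min_confidence: float = 0.9
    suggest_min_confidence: float = 0.6
    max_auto_impact: float = 50.0  # e.g. currency units at stake


def decide(confidence: float, impact: float, g: Guardrails = Guardrails()) -> dict:
    """Map model confidence and action impact to an autonomy level."""
    if confidence >= g.auto_min_confidence and impact <= g.max_auto_impact:
        mode = Mode.AUTO_EXECUTE
    elif confidence >= g.suggest_min_confidence:
        mode = Mode.SUGGEST
    else:
        mode = Mode.ASK_HUMAN
    # Returning the inputs and limits alongside the mode keeps the
    # decision auditable after the fact.
    return {"mode": mode, "confidence": confidence, "impact": impact, "limits": g}
```

For example, `decide(0.95, 500.0)` yields `Mode.SUGGEST`: the model is confident, but the impact exceeds what the guardrails allow it to execute alone.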

Context-driven experience and intention modeling

UX 3.0 incorporates contextual variables as part of the core logic of interaction. Location, usage history, behavioral patterns, device signals and situational data feed inference models that influence content organization and the prioritization of actions.

Personalization is supported by intention modeling and real-time predictive analysis, requiring careful calibration between automatic adaptation and the legibility of the experience. The system needs to be efficient without becoming opaque.
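
The tension between adaptation and legibility can be sketched with a deliberately simple scoring model. The signal names, the weights and the linear scoring are all assumptions made for illustration; real intention models are usually learned rather than hand-written. The point is that each ranked intent keeps track of the signal that contributed most, so the interface can say why something was prioritized.

```python
from __future__ import annotations


def rank_intents(
    signals: dict[str, float],
    weights: dict[str, dict[str, float]],
) -> list[dict]:
    """Score each candidate intent and keep its strongest contributing signal."""
    ranked = []
    for intent, intent_weights in weights.items():
        contributions = {
            name: weight * signals[name]
            for name, weight in intent_weights.items()
            if name in signals
        }
        score = sum(contributions.values())
        # "because" is what the UI can surface to keep the system legible.
        because = max(contributions, key=contributions.get) if contributions else None
        ranked.append({"intent": intent, "score": score, "because": because})
    ranked.sort(key=lambda r: r["score"], reverse=True)
    return ranked
```

A call such as `rank_intents({"near_due_date": 1.0, "low_balance": 0.8}, {"review_payments": {"near_due_date": 0.6, "low_balance": 0.5}})` would rank `review_payments` with `near_due_date` as its stated reason.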

Multimodality as an integrated architecture

The experience integrates multiple modes of interaction: natural language, voice recognition, gestures, environmental sensors, biometrics and three-dimensional environments such as AR and VR. Multimodality requires semantic coherence across channels and synchronization between input and output modalities.

Design must consider latency, cognitive ergonomics, multimodal accessibility and consistency of feedback. Visual, auditory and spatial interfaces operate as coordinated layers within the same architecture, sensitive to the physical environment and situational conditions.
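
A small sketch can show what sensitivity to situational conditions might mean in practice. The context keys (`available`, `noisy`, `eyes_busy`), the modality names and the `deferred_notification` fallback are hypothetical and exist only to illustrate the selection logic.

```python
from __future__ import annotations


def choose_output(context: dict, preferences: list[str]) -> str:
    """Pick the first preferred output modality that suits the situation."""
    available = set(context.get("available", []))
    if context.get("noisy"):
        available.discard("voice")   # speech would not be heard
    if context.get("eyes_busy"):
        available.discard("visual")  # e.g. the user is driving
    for modality in preferences:
        if modality in available:
            return modality
    # Fallback when no channel fits: do not interrupt, deliver later.
    return "deferred_notification"
```

With `{"available": ["visual", "voice", "haptic"], "eyes_busy": True}` and the preference order `["visual", "voice", "haptic"]`, the function returns `"voice"`.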

Data governance, ethics and human-centered AI

With systems executing automated inferences and decisions, the experience incorporates requirements for bias mitigation, decision traceability and granular control of data. Algorithmic transparency becomes a functional component of the interface.

Design must structure granular consent mechanisms, data visibility dashboards, reversibility of automations and clear communication about decision criteria. This consolidates the paradigm of human-centered AI, in which intelligence operates within limits the user can understand and adjust. Trust is not an implicit attribute; it is the consequence of well-designed governance.
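
Granular consent and reversibility can be sketched together: an automation runs only if the user has granted that specific purpose, and every executed automation is logged with a way to undo it. The class names, the purpose strings and the callback-based design are assumptions for this example, not a reference implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ConsentRegistry:
    granted: set[str] = field(default_factory=set)  # purposes the user allowed

    def grant(self, purpose: str) -> None:
        self.granted.add(purpose)

    def revoke(self, purpose: str) -> None:
        self.granted.discard(purpose)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


@dataclass
class AutomationLog:
    # Each entry: (description, purpose, undo callback)
    entries: list[tuple[str, str, Callable[[], None]]] = field(default_factory=list)

    def run(
        self,
        consent: ConsentRegistry,
        purpose: str,
        description: str,
        do: Callable[[], None],
        undo: Callable[[], None],
    ) -> bool:
        """Execute an automation only with consent, and record how to undo it."""
        if not consent.allows(purpose):
            return False
        do()
        self.entries.append((description, purpose, undo))
        return True

    def undo_last(self) -> str | None:
        """Reverse the most recent automation and return its description."""
        if not self.entries:
            return None
        description, _purpose, undo = self.entries.pop()
        undo()
        return description
```

The log's descriptions double as material for a data visibility dashboard: they state what the system did, under which consent, and that it can be reversed.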

Redefining the role of the UX professional

The UX professional now operates at the intersection of design, engineering and data science. Competencies in system architecture, AI literacy, reading data flows and systemic thinking become increasingly necessary.

The strategic scope expands to include the definition of algorithmic guardrails, trust metrics, criteria for adaptation and the design of experiences partially generated by intelligent models. UX stops operating exclusively on the visual layer and starts to influence the behavioral infrastructure of the product.

Human–system collaboration in real time

The relationship between users and technology evolves toward a collaborative model. Assistants, copilots and intelligent agents operate as cognitive extensions, learning from interactions and dynamically adjusting behaviors.

The experience becomes partially co-produced during use. Design must structure persistent feedback, the possibility of correction and a balance between computational autonomy and human control. The traditional command-response logic gives way to continuous interaction mediated by adaptive intelligence.
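
A feedback loop of this kind can be sketched as a running acceptance estimate per type of suggestion. The exponential moving average, the neutral starting value of 0.5, the learning rate and the mute threshold are all assumptions chosen for illustration; they are not taken from any specific product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SuggestionPolicy:
    acceptance: dict[str, float] = field(default_factory=dict)
    rate: float = 0.2        # how strongly one response shifts the estimate
    mute_below: float = 0.2  # stop offering proactively under this value

    def record(self, kind: str, accepted: bool) -> float:
        """Move the acceptance estimate toward 1 (accepted) or 0 (rejected)."""
        current = self.acceptance.get(kind, 0.5)  # 0.5 = no evidence yet
        target = 1.0 if accepted else 0.0
        updated = current + self.rate * (target - current)
        self.acceptance[kind] = updated
        return updated

    def should_offer(self, kind: str) -> bool:
        """Decide whether the system may still raise this suggestion on its own."""
        return self.acceptance.get(kind, 0.5) >= self.mute_below
```

With these values, five consecutive rejections silence a suggestion type, and a single later acceptance (for example, when the user asks for it explicitly) is enough to bring it back. That asymmetry is a design choice: the system backs off gradually but remains easy to correct.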
